# Qwen3-Coder-480B-A35B-Instruct

## Highlights

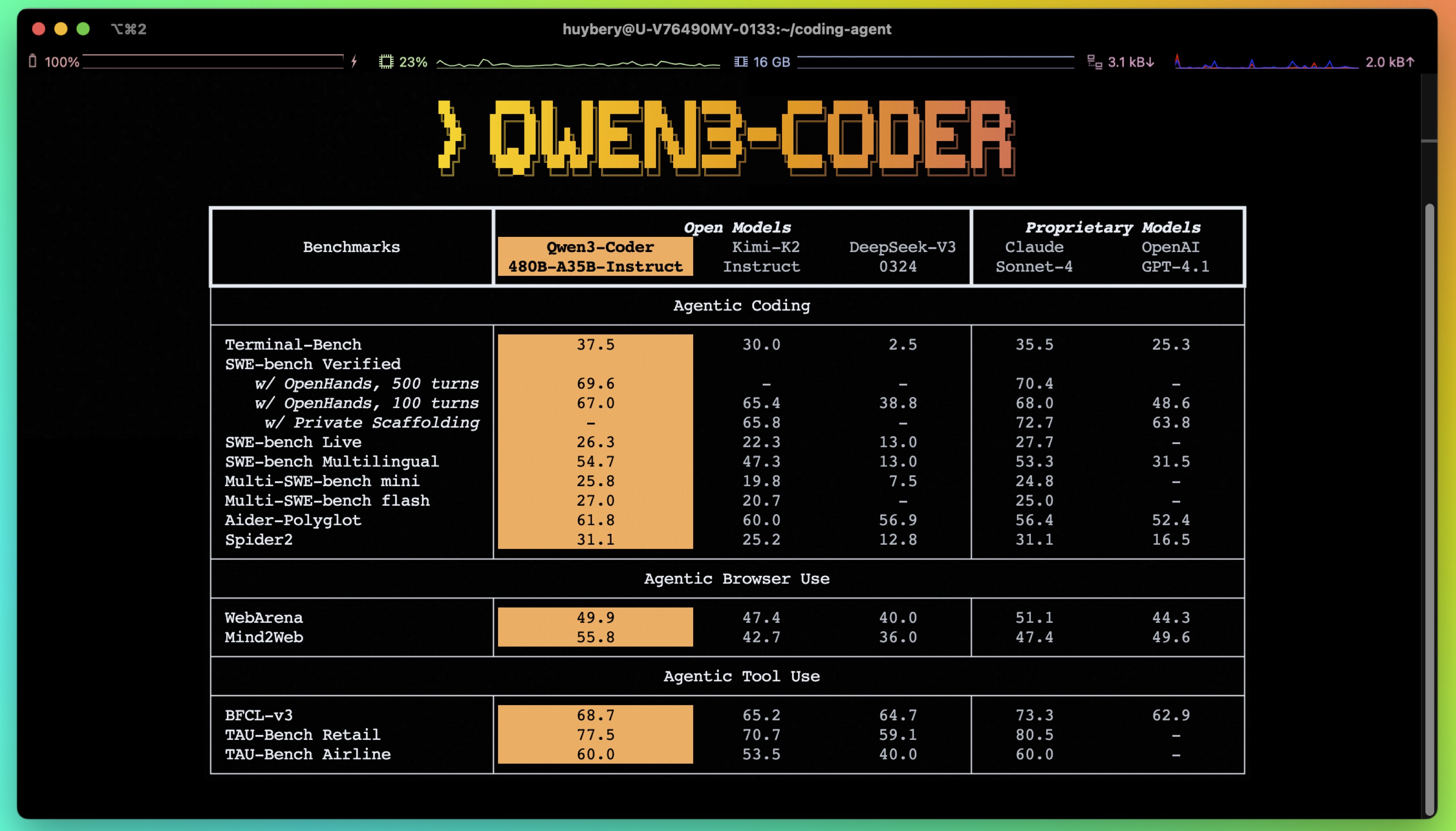

Today, we're announcing **Qwen3-Coder**, our most agentic code model to date. **Qwen3-Coder** is available in multiple sizes, but we're excited to introduce its most powerful variant first: **Qwen3-Coder-480B-A35B-Instruct**, featuring the following key enhancements:

- **Significant Performance** among open models on **Agentic Coding**, **Agentic Browser-Use**, and other foundational coding tasks, achieving results comparable to Claude Sonnet.

- **Long-context Capabilities** with native support for **256K** tokens, extendable up to **1M** tokens using YaRN, optimized for repository-scale understanding.

- **Agentic Coding** with support for most platforms, such as **Qwen Code** and **CLINE**, featuring a specially designed function-call format.
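The 256K-to-1M extension is usually expressed as a YaRN scaling factor applied to the native context window. The sketch below computes that factor and builds a `rope_scaling`-style dictionary. It makes two assumptions. First, the key names (`rope_type`, `factor`, `original_max_position_embeddings`) follow the Hugging Face `transformers` config convention, and other serving stacks may name these fields differently. Second, "1M" is taken to mean 1,048,576 (2^20) tokens. Check the model's own config and your serving framework's documentation before relying on it.

```python
def yarn_rope_scaling(native_ctx: int, target_ctx: int) -> dict:
    """Build a YaRN rope_scaling dict that stretches native_ctx to target_ctx.

    Assumption: key names mirror the Hugging Face transformers convention;
    other inference engines may expect different field names.
    """
    if target_ctx <= native_ctx:
        raise ValueError("target context must exceed the native context")
    factor = target_ctx / native_ctx  # how far positions are stretched
    return {
        "rope_type": "yarn",
        "factor": factor,
        "original_max_position_embeddings": native_ctx,
    }


NATIVE_CTX = 262_144    # native context length from the model overview
TARGET_CTX = 1_048_576  # assumed meaning of "1M" tokens (2**20)

scaling = yarn_rope_scaling(NATIVE_CTX, TARGET_CTX)
print(scaling)
```

Since 262,144 × 4 = 1,048,576, reaching the 1M-token window corresponds to a scaling factor of 4.0 under these assumptions.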

## Model Overview

**Qwen3-Coder-480B-A35B-Instruct** has the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

- Number of Parameters: 480B in total and 35B activated

- Number of Layers: 62

- Number of Attention Heads (GQA): 96 for Q and 8 for KV

- Number of Experts: 160

- Number of Activated Experts: 8

- Context Length: **262,144 natively**.
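The overview figures can be checked with some quick arithmetic. With 96 query heads and 8 KV heads, each KV head is shared by 12 query heads. The KV cache therefore holds 1/12 as many heads as full multi-head attention with 96 KV heads would. Separately, 35B of the 480B parameters (about 7.3%) are active per token. The sketch below reproduces these numbers and adds a toy router that picks 8 of 160 experts. That router is generic softmax top-k gating, chosen here for illustration. It is an assumption, not Qwen3-Coder's actual routing code, and the random logits stand in for a real gating network.

```python
import math
import random

# Figures taken from the model overview above.
TOTAL_PARAMS_B = 480
ACTIVE_PARAMS_B = 35
NUM_Q_HEADS = 96
NUM_KV_HEADS = 8
NUM_EXPERTS = 160
NUM_ACTIVE_EXPERTS = 8


def gqa_group_size(q_heads: int, kv_heads: int) -> int:
    """Number of query heads that share each key/value head under GQA."""
    if q_heads % kv_heads != 0:
        raise ValueError("query heads must divide evenly into KV groups")
    return q_heads // kv_heads


def top_k_route(logits: list[float], k: int) -> list[tuple[int, float]]:
    """Toy MoE router (illustrative assumption, not the model's real router).

    Picks the k highest-scoring experts and applies softmax over just those
    k logits, which equals renormalising the full softmax over the top-k.
    """
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


group = gqa_group_size(NUM_Q_HEADS, NUM_KV_HEADS)
print(f"query heads per KV head: {group}")
print(f"KV-cache heads vs. full multi-head attention: 1/{group}")
print(f"active parameter share: {ACTIVE_PARAMS_B / TOTAL_PARAMS_B:.1%}")

# Random gating scores for one token; a trained gate would produce these.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
routes = top_k_route(logits, NUM_ACTIVE_EXPERTS)
print(f"experts used for this token: {[i for i, _ in routes]}")
```

Only the selected experts' feed-forward weights run for a given token, which is why the active parameter count is a small fraction of the total.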

**NOTE: This model supports only non-thinking mode and does not generate `<think></think>` blocks in its output.**

## Highlights

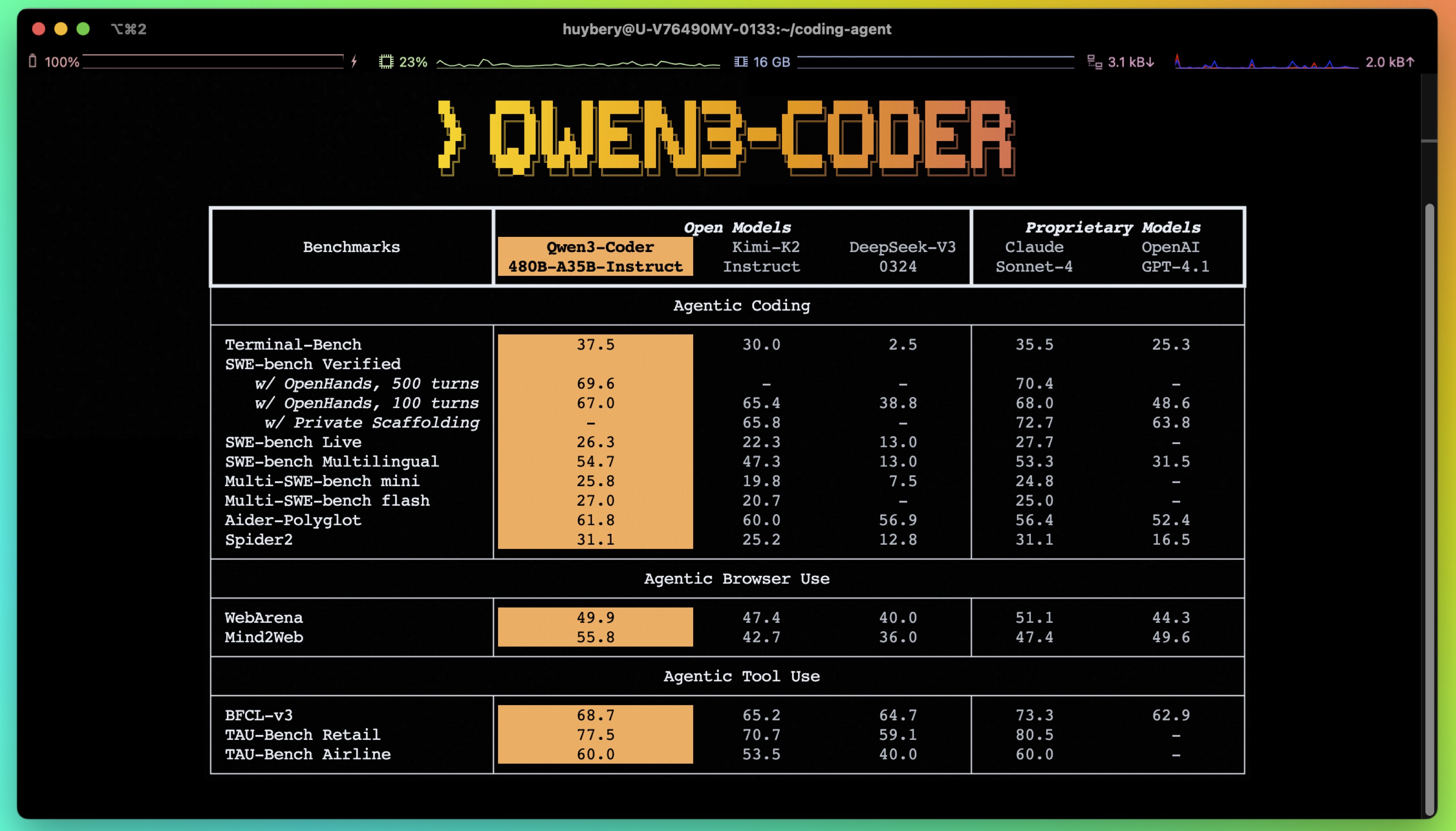

Today, we're announcing **Qwen3-Coder**, our most agentic code model to date. **Qwen3-Coder** is available in multiple sizes, but we're excited to introduce its most powerful variant first: **Qwen3-Coder-480B-A35B-Instruct**. featuring the following key enhancements:

- **Significant Performance** among open models on **Agentic Coding**, **Agentic Browser-Use**, and other foundational coding tasks, achieving results comparable to Claude Sonnet.

- **Long-context Capabilities** with native support for **256K** tokens, extendable up to **1M** tokens using Yarn, optimized for repository-scale understanding.

- **Agentic Coding** supporting for most platform such as **Qwen Code**, **CLINE**, featuring a specially designed function call format.

## Model Overview

**Qwen3-480B-A35B-Instruct** has the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

- Number of Parameters: 480B in total and 35B activated

- Number of Layers: 62

- Number of Attention Heads (GQA): 96 for Q and 8 for KV

- Number of Experts: 160

- Number of Activated Experts: 8

- Context Length: **262,144 natively**.

**NOTE: This model supports only non-thinking mode and does not generate \`\`

## Highlights

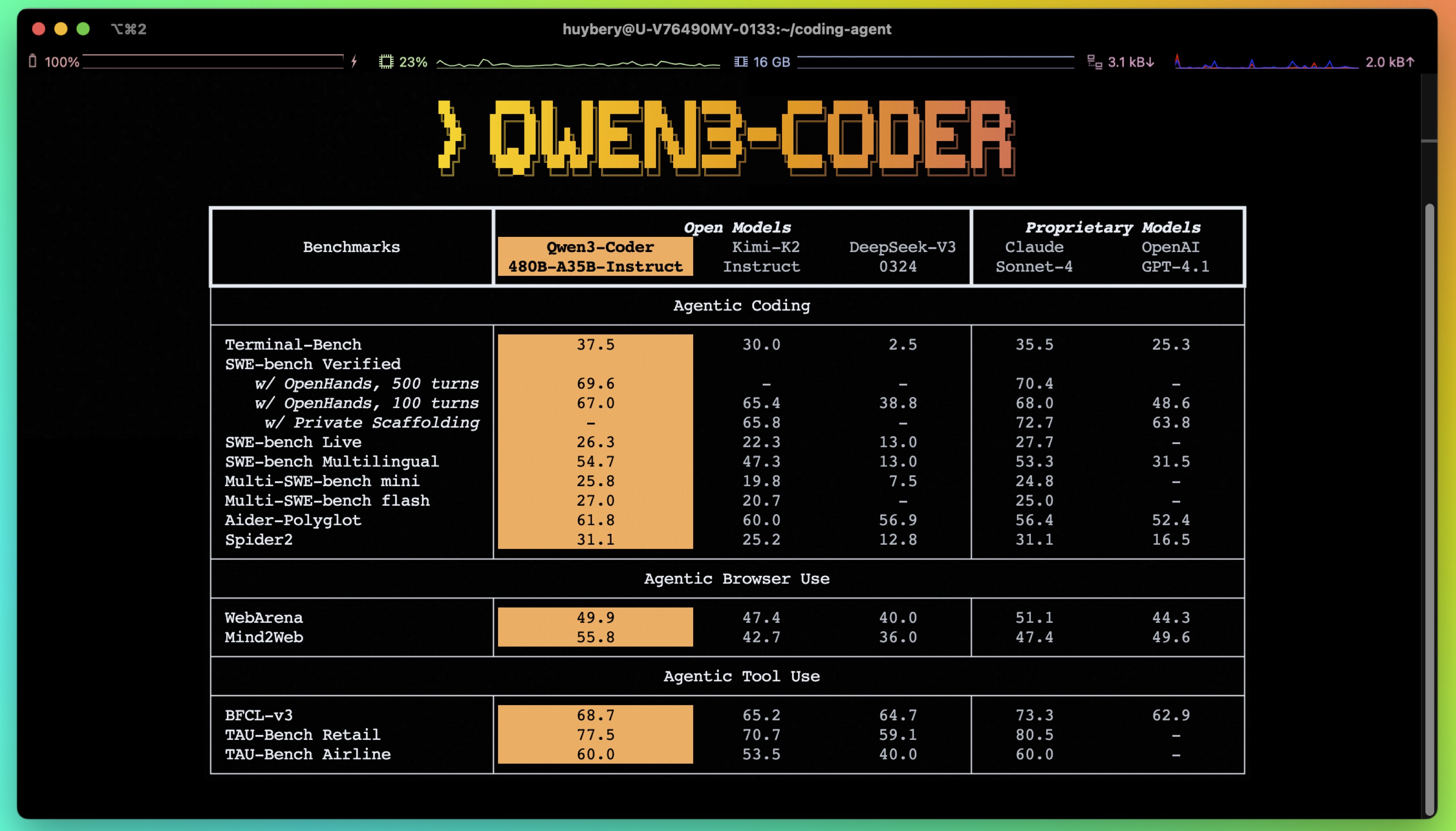

Today, we're announcing **Qwen3-Coder**, our most agentic code model to date. **Qwen3-Coder** is available in multiple sizes, but we're excited to introduce its most powerful variant first: **Qwen3-Coder-480B-A35B-Instruct**. featuring the following key enhancements:

- **Significant Performance** among open models on **Agentic Coding**, **Agentic Browser-Use**, and other foundational coding tasks, achieving results comparable to Claude Sonnet.

- **Long-context Capabilities** with native support for **256K** tokens, extendable up to **1M** tokens using Yarn, optimized for repository-scale understanding.

- **Agentic Coding** supporting for most platform such as **Qwen Code**, **CLINE**, featuring a specially designed function call format.

## Model Overview

**Qwen3-480B-A35B-Instruct** has the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

- Number of Parameters: 480B in total and 35B activated

- Number of Layers: 62

- Number of Attention Heads (GQA): 96 for Q and 8 for KV

- Number of Experts: 160

- Number of Activated Experts: 8

- Context Length: **262,144 natively**.

**NOTE: This model supports only non-thinking mode and does not generate \`\`

## Highlights

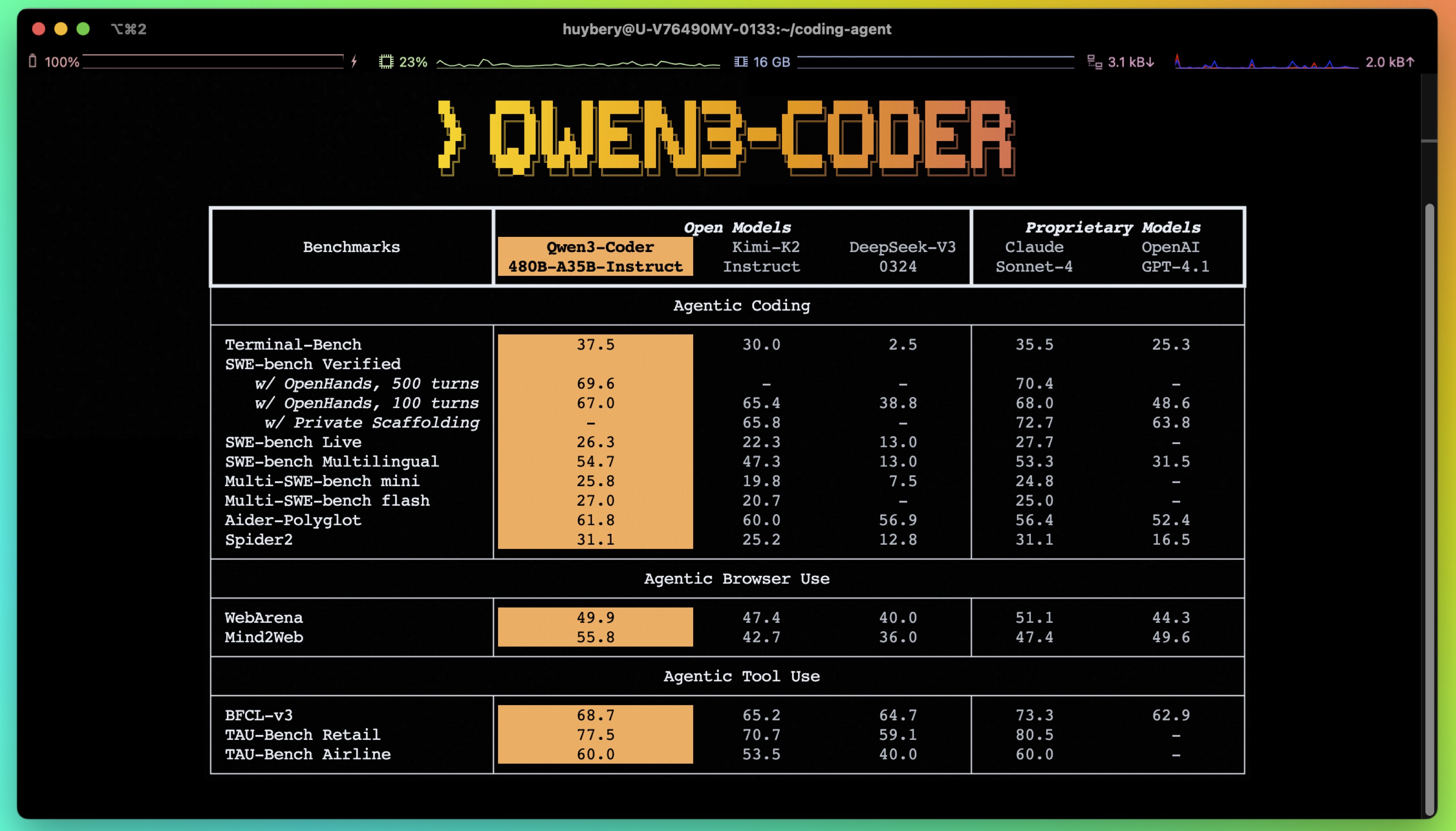

Today, we're announcing **Qwen3-Coder**, our most agentic code model to date. **Qwen3-Coder** is available in multiple sizes, but we're excited to introduce its most powerful variant first: **Qwen3-Coder-480B-A35B-Instruct**. featuring the following key enhancements:

- **Significant Performance** among open models on **Agentic Coding**, **Agentic Browser-Use**, and other foundational coding tasks, achieving results comparable to Claude Sonnet.

- **Long-context Capabilities** with native support for **256K** tokens, extendable up to **1M** tokens using Yarn, optimized for repository-scale understanding.

- **Agentic Coding** supporting for most platform such as **Qwen Code**, **CLINE**, featuring a specially designed function call format.

## Model Overview

**Qwen3-480B-A35B-Instruct** has the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

- Number of Parameters: 480B in total and 35B activated

- Number of Layers: 62

- Number of Attention Heads (GQA): 96 for Q and 8 for KV

- Number of Experts: 160

- Number of Activated Experts: 8

- Context Length: **262,144 natively**.

**NOTE: This model supports only non-thinking mode and does not generate \`\`
